SEO Compounds. AI Visibility Needs Momentum.

Most SEO teams are used to a simple model.

You publish useful content, earn links, improve rankings, and over time the work compounds. Pages you published months ago keep driving traffic. Links you earned a year ago still help. If you keep doing solid work, visibility builds.

That model still holds up in organic search.

It just does not fully explain what is happening in AI search.

Systems like AI Overviews and LLM assistants do not only reward what exists. They reward what is still being referenced, and they often seem to prefer sources that feel more current when several options are otherwise good enough.

That changes the job.

You are not only trying to rank.

You are trying to stay in circulation.


Why AI visibility doesn’t behave like traditional SEO

Google has already said in its documentation on AI features in Search that these systems can issue multiple related queries and pull from a wider set of sources than a single SERP.

That matters more than people think.

In classic SEO, the goal is obvious. You want one of the blue links. In AI search, the goal is less about winning a position and more about being included in the set of sources the model pulls from while it builds an answer.

That is a different game.

As Louise Linehan writes in Ahrefs’ analysis of AI Overview citation overlap:

"ranking for the user’s exact query is no longer a guarantee of visibility."

That line gets to the heart of it.

A lot of brands are still thinking in rank-first terms. They assume that if they have strong pages, decent authority, and stable organic performance, AI visibility should follow naturally.

Sometimes it does.

Sometimes it really doesn’t.

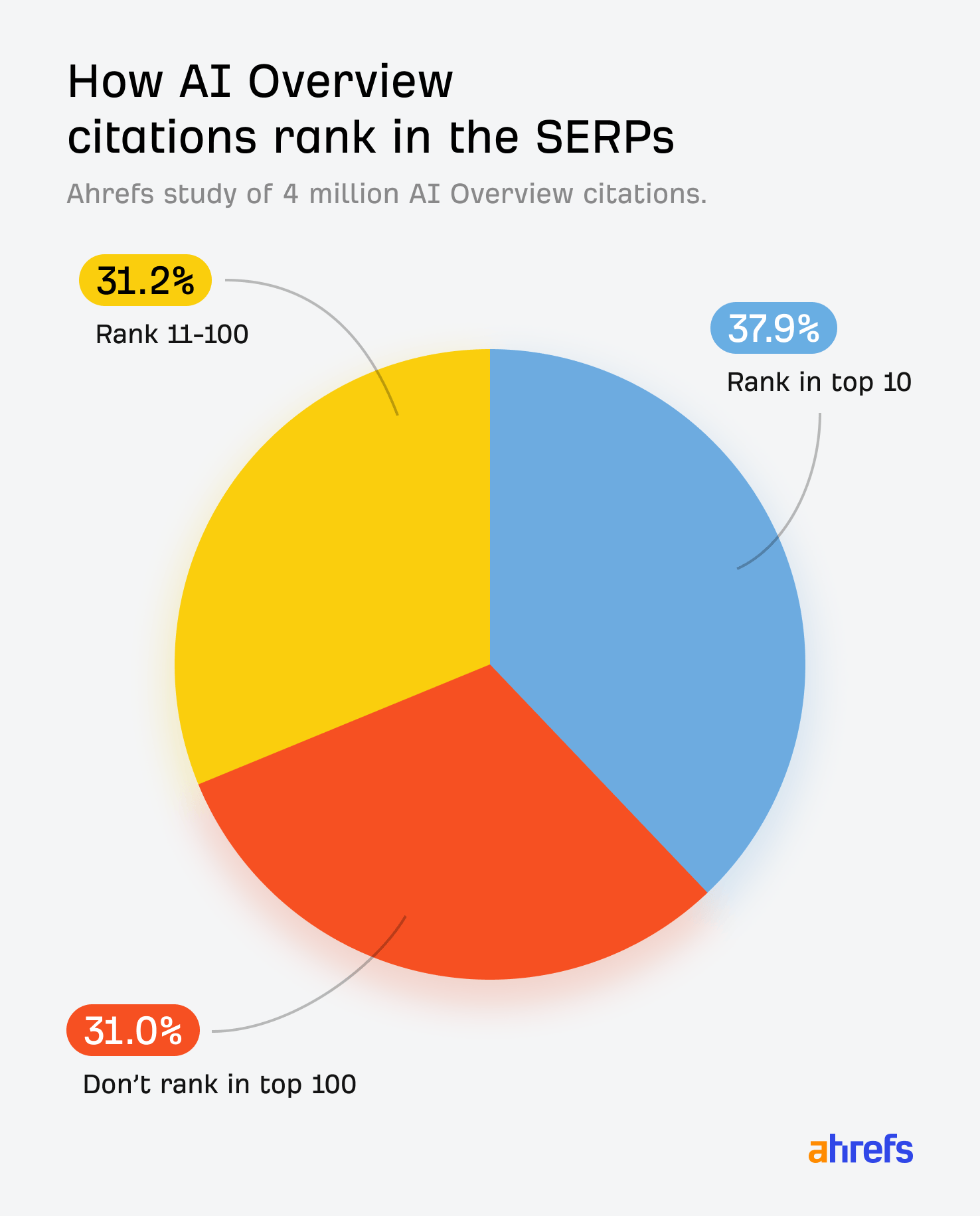

Ahrefs found that only 38% of AI Overview citations come from the top 10 organic results. Earlier studies showed much higher overlap. Even if the exact number moves over time, the direction is clear enough. Ranking still matters, but ranking alone is not a reliable proxy for AI inclusion anymore.

That is why this feels confusing when teams first run into it.

Nothing looks broken.

The site still ranks.

The pages are still live.

Technical SEO may be fine.

But the brand starts showing up less in AI answers because the model is drawing from a broader, more dynamic citation pool.

So the real question is not just, "Do we rank?"

It is also, "Are we still being referenced in the places these systems trust when they build an answer?"

That is where coverage and continuity start to matter.

Coverage means showing up across the kinds of sources AI systems actually cite.

Continuity means those references do not dry up after one campaign or one good quarter.

What the data actually says

Once you look at the research through that lens, the patterns start to line up.

The first pattern is recency.

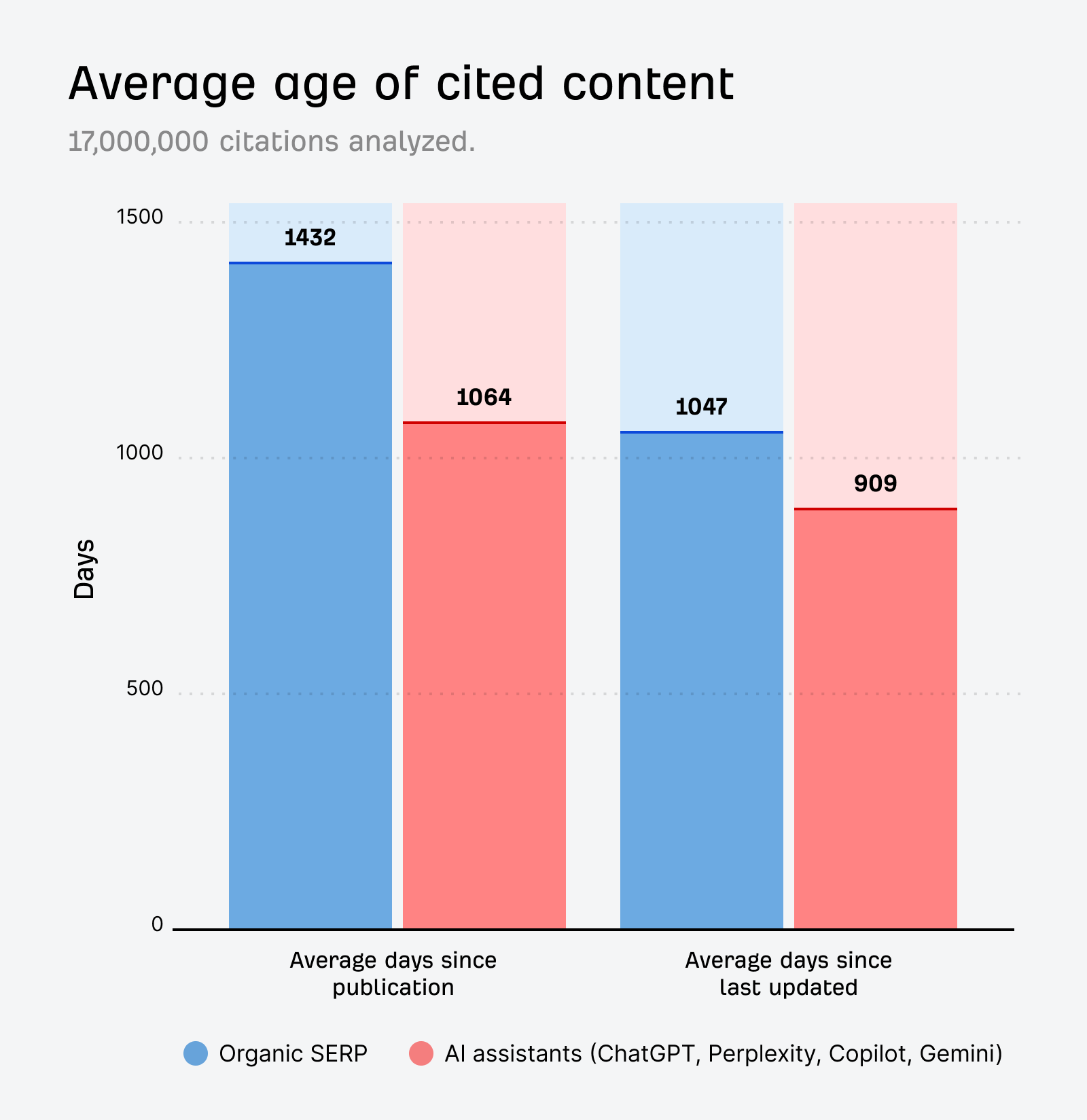

In Ahrefs’ research on fresh content in AI citations, AI assistants cited pages that were, on average, 25.7% fresher than the pages ranking organically for the same queries. Over at Wix’s AI Search Lab, around 65% of LLM bot traffic went to content published within the previous year, and about 79% went to content published within two years.

That does not mean old content is useless.

It means stale content becomes easier to replace.

If your page is still accurate but nothing around it has been refreshed, updated, or reinforced, it has a harder time holding its place when newer sources keep entering the mix.

The second pattern is volatility.

Authoritas’ research on AI Overview volatility found that roughly 70% of AI Overview results changed within two to three months. That is a big clue. It tells you these citation sets are not especially fixed. They move.

Which is exactly what a lot of marketers are seeing in practice.

A brand appears in AI answers for a stretch.

Then the visibility fades.

Not because the company suddenly became irrelevant, but because newer pages, newer mentions, and newer validations entered the system.

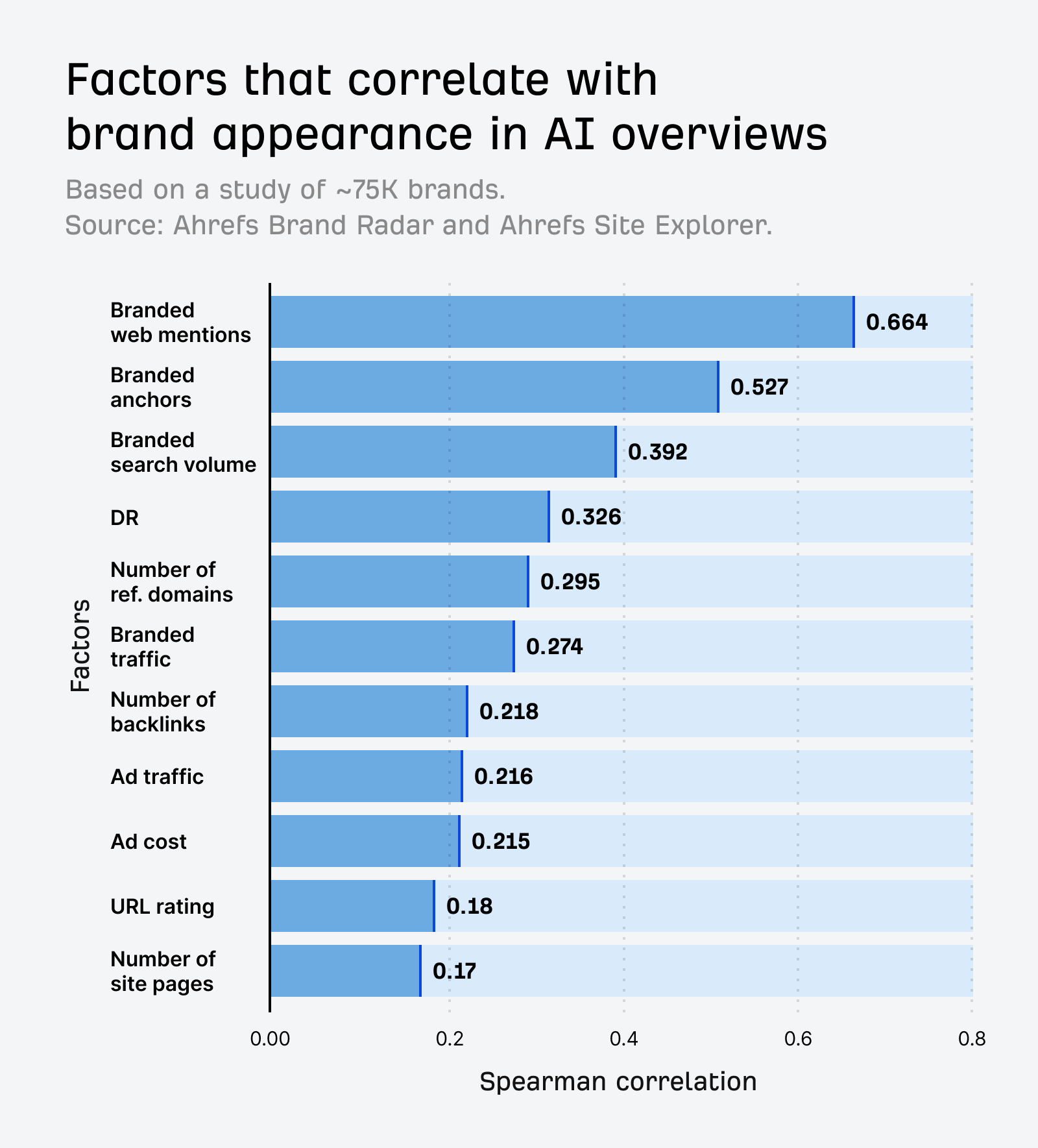

The third pattern is that mentions seem to matter more than many teams expect.

In Ahrefs’ analysis of the relationship between mentions and AI visibility, brand mentions showed a 0.664 correlation with AI visibility. Backlinks, by comparison, showed a 0.218 correlation.

That does not mean links stopped mattering.

It does mean the old habit of evaluating everything through link equity alone is probably too narrow for AI search.

A simpler way to frame it is this: AI visibility looks heavily influenced by how often your brand is referenced across the web, and whether those references are fresh enough to stay competitive.

That also helps explain why third-party buyer-stage content matters so much.

In Wix’s citation analysis, listicles, articles, and product pages made up more than half of citations, and listicles alone accounted for a large share of commercial citations.

That lines up with what most of us already see when people research software.

They do not only land on vendor sites.

They land on best-X pages, alternatives pages, comparison posts, review sites, and category roundups.

Maria Georgieva makes the same point in Search Engine Land’s piece on winning visibility in the AI era:

"visibility depends on being referenced across many platforms and websites."

That is why one strong guide on your own blog is useful, but rarely enough on its own.

It is also why older placements fade.

Not simply because they are old.

Because they are unsupported.

No new listicles mention the brand.

No recent comparison pages reinforce the same positioning.

No fresh reviews or roundups keep the company present in the wider conversation.

Once that happens, recency bias makes those older references easier to replace.

So when teams say, "we were showing up in AI answers a few months ago and now we’re not," the answer is often less dramatic than they expect.

The system moved on because the web moved on.

What SEO teams should do differently

Treat AI visibility like ongoing distribution

The biggest mistake is treating AI visibility like something you win once.

That mindset made sense in a more static SEO environment. Launch a campaign, earn a few strong links or mentions, and let the asset do its job over time.

That still works for part of the equation.

It is just incomplete now.

If AI visibility depends on repeated references and recent reinforcement, then one-off campaigns will naturally fade. They create a spike. Then the market keeps publishing, updating, and comparing, and your brand slowly falls out of the active citation set.

That is why the idea of monthly placements keeps coming up.

Not because someone invented a trendy retainer model.

Because a system that updates this often rewards brands that keep showing up.

Prioritize third-party buyer-stage coverage

A lot of SEO programs are still too site-centric for this environment.

They focus on owned content first, and everything else becomes a bonus.

For AI visibility, buyer-stage third-party coverage is not a bonus.

It is part of the core strategy.

If you sell software, you should know which pages in your category shape market perception. Not just the obvious review sites, but also the comparison articles, the alternatives posts, the independent explainers, the expert roundups, and the category pages that get refreshed regularly.

Then you need to know four things:

- where you are already mentioned

- where competitors are mentioned and you are missing

- which pages are actually updated often enough to matter

- which surfaces tend to get cited when buyers research your category

That is a much better working view of AI visibility than rankings alone.

Because again, this is not only a ranking problem.

It is a presence problem.
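If you keep this audit in a script rather than a spreadsheet, the four questions above reduce to a simple bucketing pass. The sketch below is a minimal Python illustration. It assumes you have already collected three things for each third-party page: which brands it mentions, when it was last updated, and whether you have seen it cited in your own AI answer checks. The `ThirdPartyPage` record, the `coverage_report` function, the 180-day freshness window, and the example URLs and brand names are all hypothetical choices made for illustration. They are not a standard and not any tool's API. The last-updated date is also only a rough proxy for "updated often enough to matter."

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ThirdPartyPage:
    """Hypothetical record for one third-party buyer-stage page."""
    url: str
    brands_mentioned: set[str]
    last_updated: date
    cited_in_ai_answers: bool  # seen as a citation in your own AI answer checks


def coverage_report(
    pages: list[ThirdPartyPage],
    our_brand: str,
    competitors: set[str],
    today: date,
    max_age_days: int = 180,  # illustrative freshness window, not a known threshold
) -> dict[str, list[str]]:
    """Bucket pages by the four questions: mentioned, gap, fresh, cited."""
    report = {"mentioned": [], "gaps": [], "fresh": [], "cited": []}
    for page in pages:
        if our_brand in page.brands_mentioned:
            report["mentioned"].append(page.url)
        elif page.brands_mentioned & competitors:
            # A competitor is listed here and we are not: a coverage gap.
            report["gaps"].append(page.url)
        if (today - page.last_updated).days <= max_age_days:
            report["fresh"].append(page.url)
        if page.cited_in_ai_answers:
            report["cited"].append(page.url)
    return report


pages = [
    ThirdPartyPage("https://example.com/best-crm", {"Acme", "RivalCo"}, date(2025, 5, 1), True),
    ThirdPartyPage("https://example.com/crm-alternatives", {"RivalCo"}, date(2024, 1, 10), True),
]
report = coverage_report(pages, "Acme", {"RivalCo"}, today=date(2025, 6, 1))
print(report["gaps"])  # ['https://example.com/crm-alternatives']
```

The "gaps" bucket is the outreach list. A gap page that is both fresh and already cited in AI answers is likely worth more than a stale one nobody cites.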

Keep buyer-stage pages fresh on your own site

Owned pages still matter. They just matter differently.

The pages most likely to influence AI retrieval are usually the ones closest to decision-making: category pages, solution pages, comparison pages, alternatives pages, and strong commercial explainers.

These are not pages you should publish and ignore for twelve months.

In Semrush’s guide to ranking in AI search, Anj Viray, Head of Marketing at Chargeblast, describes the work as:

"aligning our content strategy with what people are actually asking on AI platforms."

That is the right posture.

Not fake freshness.

Not changing a date in the CMS.

Actual updates.

Better examples.

New screenshots.

Clearer positioning.

Stronger comparisons.

More current information than the pages you are competing against.

If the research on freshness bias holds, then key money pages need a real review cadence. Quarterly is a reasonable starting point for a lot of software companies, especially on high-intent pages. Not because every page needs constant edits, but because stale pages become easier to displace when newer and better-supported alternatives exist.
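A quarterly cadence is easy to turn into a due-date check. The Python sketch below assumes you record the date of each page's last substantive review yourself, meaning new examples, screenshots, or comparisons. It deliberately does not rely on a CMS "last modified" field, which a cosmetic edit can bump. The 91-day interval, the `pages_due_for_review` helper, and the page paths are illustrative assumptions, not a recommendation from the research cited above.

```python
from datetime import date, timedelta

# Roughly quarterly; an illustrative default, tune it per page type.
REVIEW_INTERVAL = timedelta(days=91)


def pages_due_for_review(
    last_substantive_review: dict[str, date],
    today: date,
    interval: timedelta = REVIEW_INTERVAL,
) -> list[str]:
    """Return URLs overdue for a real content review, most overdue first.

    The dates should record actual updates (new examples, screenshots,
    comparisons), not a CMS "last modified" stamp that a cosmetic edit can bump.
    """
    overdue = [
        (today - reviewed, url)
        for url, reviewed in last_substantive_review.items()
        if today - reviewed > interval
    ]
    overdue.sort(reverse=True)  # largest gap first
    return [url for _, url in overdue]


reviews = {
    "/pricing": date(2025, 5, 20),
    "/vs-rivalco": date(2024, 11, 1),
    "/crm-software": date(2025, 1, 15),
}
print(pages_due_for_review(reviews, today=date(2025, 6, 1)))
# ['/vs-rivalco', '/crm-software']
```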

Measure AI visibility separately from organic search

If you only look at rankings and traffic, AI changes will feel random.

They are not always random.

Sometimes organic rankings hold steady while AI inclusion drops.

Sometimes a competitor is not outranking you in classic search, but they are being cited more often in AI answers because they have stronger third-party coverage and more recent reinforcement.

That means this needs its own layer of measurement.

At minimum, teams should track whether they appear in AI answers for priority commercial queries, which sources get cited, how often competitors appear, and whether their most important off-site mentions are recent.

Search Console still matters.

It just does not tell the whole story here.
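That separate layer can start small. The Python sketch below summarizes a batch of AI answer checks for priority commercial queries. For each brand it reports the share of checked answers that mention it, and it lists which domains get cited most often. It assumes you already capture the checks, by hand or with a tracking tool, into the hypothetical `AIAnswerCheck` record shown. It does not call any AI platform or Search Console API, and the queries, brands, and domains are made up.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class AIAnswerCheck:
    """Hypothetical record of one AI answer captured for a priority query."""
    query: str
    brands_mentioned: set[str]
    cited_domains: list[str]


def visibility_summary(checks: list[AIAnswerCheck], brands: list[str], top_n: int = 5):
    """Return (share of answers mentioning each brand, most-cited domains)."""
    total = len(checks)
    inclusion = {
        brand: sum(brand in c.brands_mentioned for c in checks) / total if total else 0.0
        for brand in brands
    }
    # Count each domain at most once per answer, keeping first-seen order for ties.
    domain_counts = Counter(d for c in checks for d in dict.fromkeys(c.cited_domains))
    return inclusion, domain_counts.most_common(top_n)


checks = [
    AIAnswerCheck("best crm for startups", {"RivalCo"}, ["reddit.com", "g2.com"]),
    AIAnswerCheck("acme vs rivalco", {"Acme", "RivalCo"}, ["acme.com", "g2.com"]),
]
inclusion, top_domains = visibility_summary(checks, ["Acme", "RivalCo"])
print(inclusion)  # {'Acme': 0.5, 'RivalCo': 1.0}
```

Run it on the same query set on a regular schedule and compare results over time. Given how much these answers move, a single snapshot says little.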

The practical takeaway

SEO still compounds.

Strong pages, technical foundations, and durable links still create long-term value.

But AI visibility behaves more like an active distribution layer sitting on top of the web.

If your brand is not being mentioned, refreshed, and reinforced, your inclusion can fade even while your SEO foundations remain solid.

That is the shift.

From rank to inclusion.

From one-off wins to ongoing presence.

From static authority to active validation.

So yes, being referenced matters.

But so does whether those references still look current enough to win a place in the citation set.

That is why this is not just a mentions story.

It is a mentions-plus-recency story.

SEO compounds.

AI visibility doesn’t, unless you keep going.

Frequently Asked Questions

Do rankings still matter for AI visibility?

Yes.

They still help because strong rankings increase the odds that your pages are part of the pool AI systems can draw from. The problem is that rankings no longer explain the whole outcome. As Ahrefs’ AI Overview overlap study showed, many citations come from outside the top 10 results, so a decent ranking does not guarantee inclusion.

Do AI Overviews reduce clicks?

In many cases, yes.

Recent Semrush research on AI Overviews and Pew Research Center reporting on click behavior when AI summaries appear both point in the same direction: when an AI summary answers enough of the question upfront, fewer people click through.

That does not make visibility irrelevant. It just means teams need to separate citation presence from traffic impact and measure both.

Why does Reddit show up so often in AI answers?

Because it gives AI systems something a lot of brand content does not: direct language, disagreement, first-hand experience, and discussion.

That is one reason Reddit has become one of the most-cited sources across major AI search platforms. In software and product research especially, community content often helps models ground an answer in real user sentiment.

How often should you refresh content for AI visibility?

There is no perfect rule, but high-intent pages should not sit untouched for long stretches.

If a page influences category positioning, product comparisons, or commercial research, a quarterly review is a sensible place to start. The goal is not to manufacture activity. It is to make sure the page stays current enough to compete against newer pages and fresher citations.

How do you track AI visibility if Google Search Console does not show the full picture?

You need a separate workflow.

Start by tracking a set of priority commercial queries and noting whether your brand appears in AI answers, which sources are cited, how often the citation set changes, and which competitors keep showing up. That will give you a clearer operating view than rankings alone, especially when AI visibility moves before traffic does.
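To put a number on how often the citation set changes, one simple option is to compare the cited domains for the same query across two checks. The sketch below uses 1 minus the Jaccard similarity of the two sets. That is one reasonable churn measure among several, not an industry standard. The function name and sample domains are hypothetical.

```python
def citation_churn(previous: set[str], current: set[str]) -> float:
    """Share of the combined citation set that differs between two checks.

    Computed as 1 - Jaccard similarity: 0.0 means identical sets, 1.0 means no overlap.
    """
    union = previous | current
    if not union:
        return 0.0  # nothing cited either time, so nothing changed
    return 1 - len(previous & current) / len(union)


march = {"g2.com", "reddit.com", "acme.com", "capterra.com"}
june = {"g2.com", "reddit.com", "rivalco.com", "techradar.com"}
print(round(citation_churn(march, june), 2))  # 0.67
```

Here only two of the six distinct domains appear in both checks, so churn is about 0.67.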
